Leveraging speaker attribute information using multi task learning for speaker verification and diarization

(Oral presentation)

Chau Luu (University of Edinburgh, UK), Peter Bell (University of Edinburgh, UK), Steve Renals (University of Edinburgh, UK)

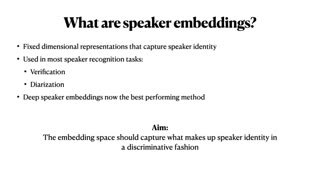

Deep speaker embeddings have become the leading method for encoding speaker identity in speaker recognition tasks. The embedding space should ideally capture the variations between all possible speakers, encoding the multiple acoustic aspects that make up a speaker’s identity, whilst being robust to non-speaker acoustic variation. Deep speaker embeddings are normally trained discriminatively, predicting speaker identity labels on the training data. We hypothesise that additionally predicting speaker-related auxiliary variables, such as age and nationality, may yield representations that are better able to generalise to unseen speakers. We propose a framework for making use of auxiliary label information, even when it is only available for speech corpora mismatched to the target application. On a test set of US Supreme Court recordings, we show that by leveraging two additional forms of speaker attribute information, derived from the matched training data and from the VoxCeleb corpus respectively, we improve the performance of our deep speaker embeddings for both verification and diarization tasks, achieving relative improvements of 26.2% in diarization error rate (DER) and 6.7% in equal error rate (EER) compared to baselines using speaker labels only. This improvement is obtained despite the auxiliary labels having been scraped from the web and being potentially noisy.
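The multi-task idea described above can be illustrated with a minimal sketch of a combined training objective. This is not the authors' implementation: the abstract does not specify the loss, the task weighting, or how missing attribute labels are handled. The function names, the `aux_weight` parameter, and the convention that a label of `None` means "attribute unavailable for this utterance" are all assumptions made for illustration.

```python
import math


def cross_entropy(logits, label):
    """Negative log-probability of `label` under softmax(logits)."""
    m = max(logits)  # subtract the max for numerical stability
    log_norm = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_norm - logits[label]


def multitask_loss(speaker_logits, speaker_label, aux_logits, aux_labels,
                   aux_weight=0.5):
    """Speaker-identity loss plus weighted auxiliary attribute losses.

    aux_logits and aux_labels are dicts keyed by a (hypothetical) task name
    such as "age" or "nationality". A label of None is assumed to mean the
    attribute is not available for this utterance, so that task is skipped.
    """
    loss = cross_entropy(speaker_logits, speaker_label)
    for task, label in aux_labels.items():
        if label is None:
            continue  # no attribute label: only the other tasks contribute
        loss += aux_weight * cross_entropy(aux_logits[task], label)
    return loss
```

Skipping tasks with missing labels is one simple way to let a single model train on corpora that carry different kinds of attribute information; other schemes, such as per-task weights or alternating batches by corpus, would fit the same description.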