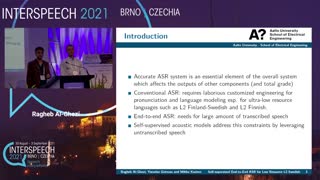

Self-supervised End-to-End ASR for Low Resource L2 Swedish

(Oral presentation)

| Ragheb Al-Ghezi (Aalto University, Finland), Yaroslav Getman (Aalto University, Finland), Aku Rouhe (Aalto University, Finland), Raili Hildén (University of Helsinki, Finland), Mikko Kurimo (Aalto University, Finland) |
|---|

Unlike traditional (hybrid) Automatic Speech Recognition (ASR), end-to-end ASR systems simplify the training procedure by directly mapping acoustic features to sequences of graphemes or characters, thereby eliminating the need for specialized acoustic, language, or pronunciation models. However, one drawback of end-to-end ASR systems is that they require more training data than conventional ASR systems to achieve a similar word error rate (WER). This makes it difficult to develop ASR systems for tasks where transcribed target data is limited, such as ASR for Second Language (L2) speakers of Swedish. Nonetheless, recent advances in self-supervised acoustic learning, exemplified by the wav2vec models [1, 2, 3], leverage available untranscribed speech data to provide compact acoustic representations that can achieve low WER when incorporated into end-to-end systems. To this end, we experiment with several monolingual and cross-lingual self-supervised acoustic models to develop an end-to-end ASR system for L2 Swedish. Even though our test set is very small, it indicates that these systems are competitive in performance with a traditional ASR pipeline. Our best model seems to reduce the WER by 7% relative to our traditional ASR baseline trained on the same target data.
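For readers less familiar with the metric, the following is a minimal sketch of how WER and a relative WER reduction are commonly computed. It is not the evaluation code used in this work: the function names `word_error_rate` and `relative_wer_reduction` are hypothetical, the sketch assumes whitespace tokenization with no text normalization, and it assumes the reported 7% is a relative reduction of the form (baseline − system) / baseline. The WER values in the usage note are illustrative only and are not results from the abstract.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = word-level edit distance / number of reference words.

    Assumption: words are split on whitespace, with no normalization.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,                      # deletion
                curr[j - 1] + 1,                  # insertion
                prev[j - 1] + substitution_cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


def relative_wer_reduction(baseline_wer: float, system_wer: float) -> float:
    """Fraction by which system_wer improves on baseline_wer."""
    if baseline_wer <= 0:
        raise ValueError("baseline_wer must be positive")
    return (baseline_wer - system_wer) / baseline_wer
```

Under these assumptions, a hypothetical baseline WER of 0.30 and a system WER of 0.279 would correspond to a relative reduction of about 7%, that is, `relative_wer_reduction(0.30, 0.279)` is approximately 0.07.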